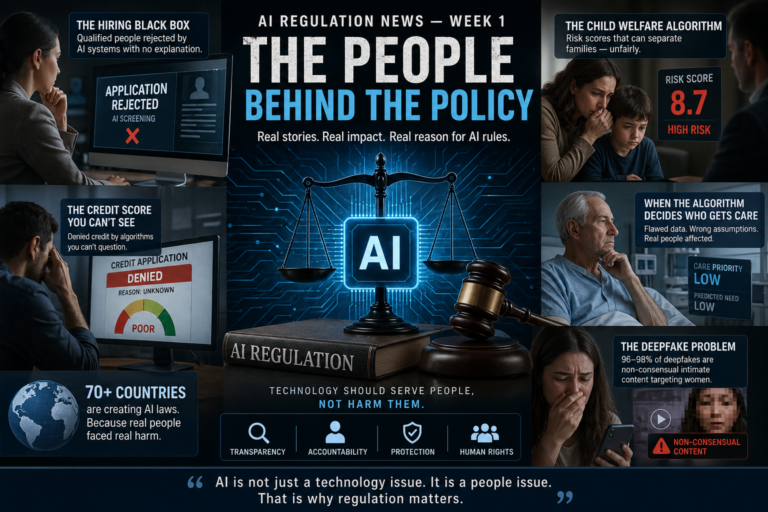

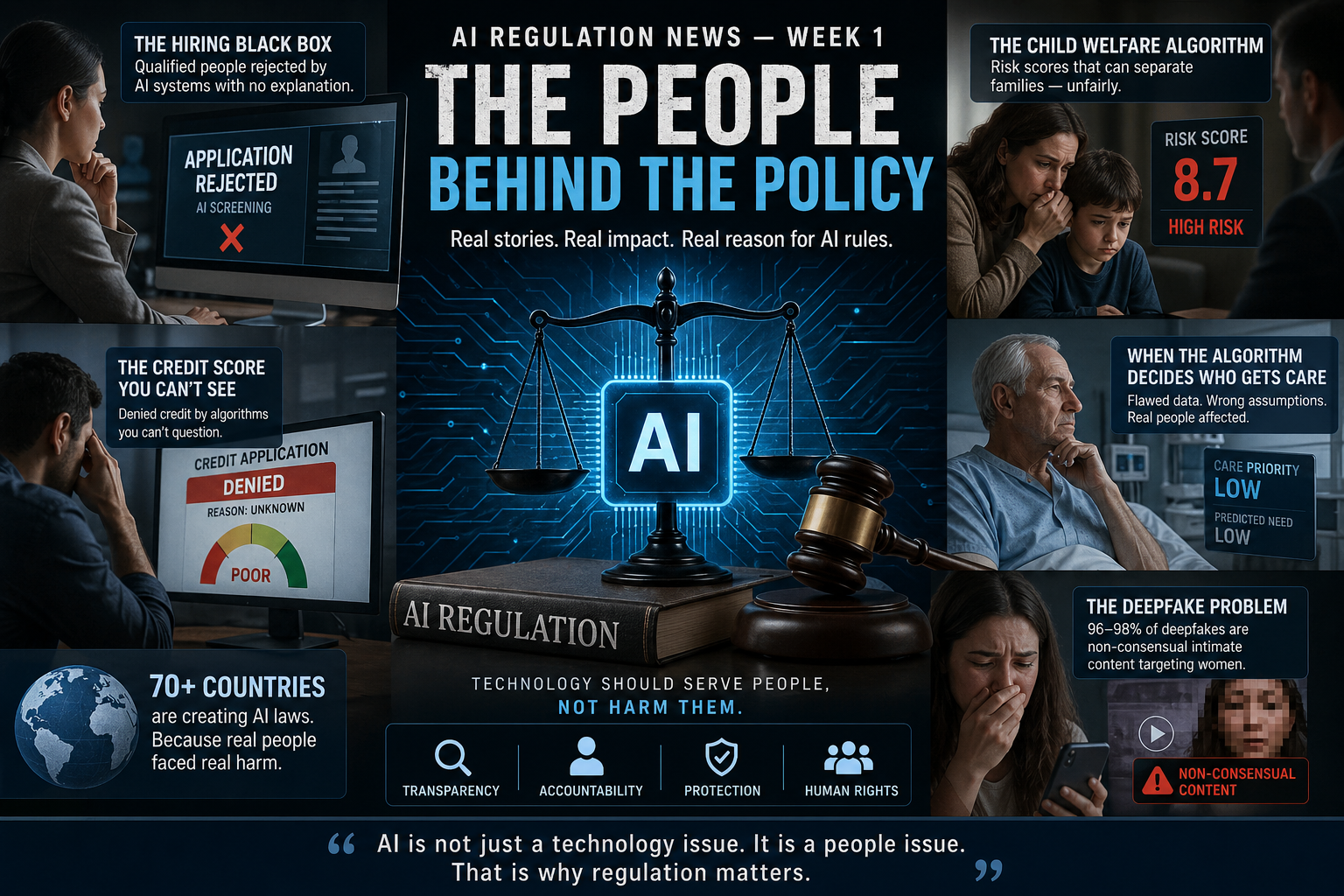

“The Algorithm Said No”: Real Stories Behind the Push for AI Rules

Most coverage of AI regulation reads like a legal textbook.

Risk frameworks. Compliance timelines. Annex this, Article that.

And most people stop reading.

But there is something important hidden behind all that language:

Every major AI regulation being written today exists because something went wrong in the real world.

A person was rejected for a job by software that never explained why.

A person was denied credit by a system they could not question.

A family decision was influenced by a risk score no one could fully see.

These are not rare hypotheticals. They are the kinds of cases repeatedly referenced by researchers, journalists, and policymakers explaining why AI laws are accelerating globally.

More than 70 countries now have AI policies or regulatory frameworks. That level of alignment is not accidental. It reflects a shared realization: AI systems are already shaping real-world outcomes, often before rules have caught up.

This week, instead of legal theory, we focus on what actually triggered the shift.

The Hiring Black Box

You apply for a job. Your CV is strong. Your experience fits. You are qualified.

A few days later, an automated email arrives.

“After careful consideration, we regret to inform you…”

You ask for feedback. None comes. You ask who reviewed your application. Nobody answers because nobody did. An automated system filtered your CV before a human ever saw it.

This is no longer unusual.

Surveys suggest that a majority of large organizations now use AI tools somewhere in their recruiting. CV screening, candidate ranking, and interview analysis have become standard parts of hiring pipelines.

The goal is efficiency. The risk is replication.

AI systems trained on historical hiring data often inherit historical patterns. If past hiring favored certain groups, the system may learn that pattern as “success,” even if it reflects bias.

That does not require intent. It only requires data.

This is why employment-related AI is classified as high-risk under the EU AI Act. Systems that influence access to jobs must meet strict requirements: bias testing, documentation, transparency, and human oversight.

The issue is not automation itself. It is the absence of visibility.

When a system decides who gets considered — and no one can explain why — the decision becomes difficult to challenge and almost impossible to audit externally.

The Credit Score You Cannot See

Credit decisions shape everyday life.

Housing. Loans. Interest rates. In some cases, employment.

Now those decisions are increasingly influenced by AI.

Modern lending systems analyze far more than traditional credit history. They may include behavioral signals such as spending patterns, payment timing, or account activity to predict risk.

At first glance, this seems like progress. More data should improve accuracy.

But there is a problem: opacity.

When an applicant is rejected, they are rarely told which factors mattered most. They cannot verify whether the system made an error. They cannot easily detect whether neutral signals acted as proxies for protected traits.

The issue is not that these systems always fail. It is that people cannot reliably understand or challenge them when they do.

That is why credit scoring appears as a high-risk category under the EU AI Act. It is also why U.S. states are introducing laws requiring explainability and accountability in automated decision-making.

Across jurisdictions, the principle is becoming clearer:

If a system decides access to financial opportunity, the decision cannot remain a black box.

The Child Welfare Algorithm That Got It Wrong

In Allegheny County, Pennsylvania, a predictive system has been used to help prioritize child welfare cases.

The intent was practical: help caseworkers manage overwhelming workloads.

The reality has been more complex.

The system has been studied extensively, with mixed findings. Some audits identified persistent disparities, while local authorities have updated the model over time.

A key concern has been how the system interprets data.

Families with greater interaction with public services — such as welfare or housing support — generate more data. That visibility can be interpreted as higher risk, even when it reflects economic conditions rather than actual harm.

The distinction matters.

If poverty increases visibility, and visibility increases risk scores, then investigations can become unevenly distributed. Those investigations produce more data, which can reinforce the same pattern.

The result is not necessarily intentional bias. It is a structural feedback loop.
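
To make the loop concrete, here is a toy sketch. It is not the Allegheny County model, and every name and number in it is an assumption made for illustration. It assumes investigations are allocated in proportion to how many records each group already has, and that each investigation adds one more record. Actual harm is not modeled at all, which is the point: the imbalance comes from visibility alone.

```python
def simulate(records, budget, rounds):
    """Toy feedback loop (hypothetical): more records -> more investigations -> more records."""
    history = [dict(records)]
    for _ in range(rounds):
        total = sum(records.values())
        # Each group's share of investigations mirrors its share of existing records.
        records = {group: n + budget * n / total for group, n in records.items()}
        history.append(dict(records))
    return history


# Invented starting point: one group simply has more contact with public services.
history = simulate({"high_contact": 60.0, "low_contact": 40.0}, budget=100, rounds=3)
for step, snapshot in enumerate(history):
    gap = snapshot["high_contact"] - snapshot["low_contact"]
    print(step, snapshot, "gap:", gap)
```

In this sketch the high-contact group keeps receiving 60% of investigations in every round, and the absolute gap in records grows from 20 to 80, even though nothing in the model says that group is more dangerous.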

This is one reason child welfare systems are consistently treated as high-risk in AI regulation. Decisions affecting families require transparency, oversight, and the ability to review outcomes.

When algorithms influence decisions at that level, the margin for error becomes a policy issue, not just a technical one.

Healthcare: When the Algorithm Decides Who Gets Care

Healthcare systems increasingly use AI to identify patients who may need additional support.

In one widely cited case, an algorithm used cost as a proxy for medical need.

The logic was simple: higher cost implies higher need.

But the assumption did not hold.

Healthcare access is uneven. Some groups receive less care due to systemic barriers. Lower spending, in those cases, reflects limited access rather than lower need.

The model interpreted lower cost as lower priority.

The outcome was measurable: certain patients were less likely to receive additional care, even when their conditions were comparable.

No malicious intent. No explicit discrimination.

Just a flawed proxy.

This is a recurring lesson in AI systems:

If the data reflects inequality, the model can reproduce it — even when the objective appears neutral.
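
A small sketch shows how the proxy can go wrong. The patients, the numbers, and the use of a condition count as a stand-in for medical need are all invented for illustration; this is not the algorithm from the case above.

```python
# Hypothetical patients: two groups with different levels of access to care.
patients = [
    {"id": "p1", "group": "full_access", "conditions": 4, "cost": 8000},
    {"id": "p2", "group": "limited_access", "conditions": 4, "cost": 5000},
    {"id": "p3", "group": "full_access", "conditions": 2, "cost": 6000},
    {"id": "p4", "group": "limited_access", "conditions": 3, "cost": 3000},
]


def top_k(rows, key, k):
    """Return the ids of the k patients with the highest value for `key`."""
    ranked = sorted(rows, key=lambda p: p[key], reverse=True)
    return [p["id"] for p in ranked[:k]]


# Two slots in an extra-care program, filled two different ways.
by_cost = top_k(patients, "cost", 2)        # ranks on past spending (the proxy)
by_need = top_k(patients, "conditions", 2)  # ranks on the assumed measure of need

print("selected by cost:", by_cost)
print("selected by need:", by_need)
```

Ranked by cost, the two slots go to p1 and p3. Ranked by the assumed need measure, they go to p1 and p2. Patient p2 is as sick as p1 but drops out under the cost ranking, purely because less was spent on their care.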

That is why healthcare AI is treated as high-risk across multiple jurisdictions. Systems affecting patient outcomes must be explainable, testable, and subject to oversight.

Because in healthcare, small errors scale quickly.

The Deepfake Problem

She had never agreed to be part of the video.

She had never recorded anything resembling it.

She only discovered it after someone else recognized her face online.

The content had been generated using AI.

Her likeness was mapped onto another person’s body without consent.

Removing it was not immediate. Automated systems did not catch it quickly. Reporting required persistence. Verification was difficult.

The harm, however, was immediate.

And increasingly common.

Research shows that the overwhelming majority of deepfake content online, often estimated at between 96% and 98%, consists of non-consensual intimate imagery, disproportionately targeting women.

Growth rates have been extreme. In some tracked categories, volumes have increased by thousands of percent within a few years.

This is why regulation has accelerated.

- The EU AI Act requires labeling of AI-generated content

- Under the Act's transparency rules, deepfakes must be identifiable as artificially generated or manipulated

- The UK has criminalized certain forms of non-consensual synthetic media

- U.S. states have introduced targeted legislation

The pattern is clear.

This is not only a technology issue. It is a consent issue.

The Pattern Behind All of These Stories

Different sectors. Different systems. Different outcomes.

But the same underlying structure:

Decisions with real consequences, made by systems that are difficult to see, explain, or challenge.

That is the core issue driving AI regulation.

At its simplest, the concern is not whether AI exists.

It is whether people affected by it have:

- visibility into decisions

- a way to question outcomes

- protection against systematic error

That is what “fundamental rights” means in practice.

Not theory.

Process.

What This Means Right Now

If you deploy AI:

You need to understand how it works, how it was trained, and how it behaves across different groups.

If you cannot explain those things, regulation will eventually require that you can.
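
One simple starting point for "how it behaves across different groups" is to compare selection rates. The sketch below assumes you can export past decisions as (group, selected) pairs. The function names and the data are hypothetical, and a single ratio is a first check, not a full bias audit.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Share of positive outcomes per group, from (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest selection rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group
    return min(rates.values()) / highest


# Invented data: 100 applicants per group.
decisions = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 20 + [("B", False)] * 80
)
rates = selection_rates(decisions)
print(rates)
print(rate_ratio(rates))
```

Here group B is selected at half the rate of group A. That does not prove the system is biased, but it is exactly the kind of gap you should be able to explain before a regulator or a rejected applicant asks.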

If you use AI services:

You need to ask vendors direct questions about bias testing, data sources, and error handling.

If answers are unclear, that is a signal.

If you are a user:

The rights being introduced (transparency, explanation, and challenge mechanisms) are designed for exactly these situations.

The Bigger Frame

The EU AI Act. U.S. state laws. China’s regulatory model. South Korea’s framework.

Different approaches.

Same trigger.

Real-world harm exposed gaps in oversight.

Governments are now trying to close those gaps — each in their own way.

That is the story beneath the policy language.