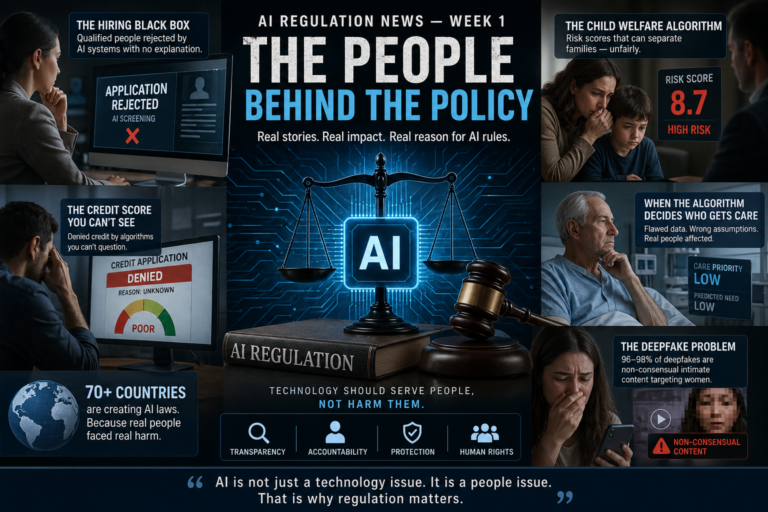

Here’s the honest truth about AI regulation right now.

Nobody fully agrees on what to do.

Not governments. Not companies. Not lawyers. Not even the people building AI systems themselves.

What you have instead is a global race not just to build powerful AI, but to write the rules that govern it. And in 2026, that race is moving faster than most people realize.

The EU is enforcing the world’s first comprehensive AI law, with the biggest deadline yet approaching in August. The U.S. federal government wants to sweep away the patchwork of state laws and create one national standard. States are passing record numbers of AI bills anyway. China is running its own system entirely. And more than 70 countries around the world have now issued at least one AI policy, strategy, or regulation.

The world is trying to govern a technology that is evolving faster than any law can keep up with.

Here’s what’s actually happening in plain language.

The Big Picture: Three Very Different Visions for AI Governance

Before getting into the specific news, it helps to understand the fundamental divide.

Right now, the world has fractured into three structurally different approaches to governing AI:

The EU’s approach: Rights-based, risk-based, comprehensive. Mandatory law covering all sectors. Extraterritorial reach: if your AI affects EU users, you comply, regardless of where you’re based. Some of the highest fines anywhere: up to 7% of global annual turnover for serious violations.

The U.S. approach: Innovation-first, sector-specific. No federal AI law. The Trump administration revoked Biden-era AI safety requirements in January 2025 and has been pushing to preempt state rules. But states are filling the vacuum with their own legislation faster than Washington can act.

China’s approach: State-directed control. Mandatory content regulation, pre-launch security assessments, and algorithmic filing requirements enforced by the Cyberspace Administration of China. AI as an instrument of state governance, not individual rights protection.

These are not just different policy preferences. They are fundamentally incompatible visions of what AI is for and who it serves.

And every company building or deploying AI in multiple markets has to navigate all three simultaneously.

The EU AI Act: The August 2026 Deadline Is Here

The most consequential AI regulation in the world right now is the EU AI Act, and its biggest enforcement deadline arrives on August 2, 2026.

The Act entered into force in August 2024 and has been rolling out in phases since then. The first prohibitions, banning practices such as social scoring and real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions), took effect in February 2025. Rules for general-purpose AI models began applying in August 2025.

August 2, 2026 is the date most businesses should be paying close attention to.

This is when requirements for the high-risk AI systems listed in Annex III become fully enforceable. That covers eight specific areas: biometrics, critical infrastructure, education, employment and worker management, access to essential services (credit scoring, insurance), law enforcement, migration and border control, and administration of justice.

What does “high-risk” compliance actually require?

It means maintaining a risk management system across the AI system’s entire lifecycle. It means technical documentation. Conformity assessments. CE marking. Registration in the EU’s AI database. Mechanisms for human oversight. Data governance standards.

It means, in practical terms, that your AI-powered hiring tool, your automated credit scoring system, or your life or health insurance pricing model now has to be documented, tested, assessed, and registered before it operates in the EU market.

The penalties for non-compliance are real: up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices, and up to €15 million or 3% for violations of the high-risk obligations.
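Each fine tier combines a fixed amount with a percentage of worldwide turnover. The minimal Python sketch below makes that arithmetic concrete. It assumes our reading of Article 99 of the Act: most companies face whichever cap is higher, while SMEs and start-ups face whichever is lower. The tier labels and the `max_fine_eur` function are hypothetical illustrations, and the result is a statutory ceiling, not a prediction of what a regulator would actually impose.

```python
# Minimal sketch of EU AI Act fine *ceilings*, assuming our reading of Article 99:
# the higher of the two caps applies to most companies, the lower one to SMEs.
# Tier names and this function are illustrative, not an official calculator.
FINE_TIERS = {
    # tier: (fixed cap in EUR, percent of worldwide annual turnover)
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligation": (15_000_000, 3),
}


def max_fine_eur(tier: str, global_turnover_eur: int, is_sme: bool = False) -> float:
    """Return the statutory maximum for a tier, not the fine a regulator would impose."""
    fixed_cap, percent = FINE_TIERS[tier]
    turnover_cap = global_turnover_eur * percent / 100
    # Large companies: whichever cap is higher. SMEs and start-ups: whichever is lower.
    if is_sme:
        return float(min(fixed_cap, turnover_cap))
    return float(max(fixed_cap, turnover_cap))


if __name__ == "__main__":
    turnover = 2_000_000_000  # hypothetical company with EUR 2 billion turnover
    print(max_fine_eur("prohibited_practice", turnover))   # 7% of 2B exceeds 35M
    print(max_fine_eur("high_risk_obligation", turnover))  # 3% of 2B exceeds 15M
```

For a company with €2 billion in turnover, the 7% cap (€140 million) is well above the €35 million fixed amount, which is why the percentage is the figure that matters for large firms.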

The “Digital Omnibus” Delay (But Don’t Count On It)

There is a twist.

The European Commission proposed a “Digital Omnibus” package in late 2025 that could delay the Annex III high-risk requirements until December 2027. Trilogue negotiations are underway, and as of March 2026 the European Parliament had adopted its position with 569 votes in favor.

But here’s the practical advice from compliance lawyers: don’t plan around the delay. Treat August 2, 2026 as the binding deadline. If the extension materializes, you’ll be ahead. If it doesn’t, you won’t be scrambling.

Every member state must also have at least one AI regulatory sandbox operational by August 2, 2026: a controlled environment where companies can develop and test AI under regulatory supervision before the full compliance burden applies. That’s a meaningful concession to innovation, built into the same law that imposes the strictest requirements.

The United States: A Messy, Evolving Patchwork

If the EU’s approach is systematic (perhaps too systematic for companies that feel the compliance burden is enormous), the U.S. situation is the opposite: fragmented, politically contested, and moving in multiple directions at once.

The White House Framework: Federal Preemption as Priority

On March 20, 2026, the Trump administration released its National Policy Framework for Artificial Intelligence, a significant document even though it doesn’t create binding legal obligations on its own.

The Framework makes the administration’s direction clear: one national standard, minimal burden, federal preemption of state laws that “impose undue burdens,” and, notably, an explicit recommendation against creating any new federal rulemaking body for AI.

The Framework’s seven pillars are child protection, AI infrastructure and small business support, intellectual property, censorship and free speech, enabling innovation, workforce preparation, and preemption of state AI laws.

The most politically significant piece is the preemption push. The administration wants Congress to step in and override the growing patchwork of state AI laws, arguing that a fragmented state-level approach creates unnecessary barriers for U.S. companies competing globally. It doesn’t want 50 different state AI frameworks. It wants one federal one.

There’s a catch, though. The Framework is not legislation. It’s a set of recommendations. Congress still needs to act. And Congress moves slowly.

The State Law Explosion

While federal action stalls, states are moving fast.

A record 150 AI-related bills were passed by U.S. state legislatures in 2025 alone. On January 1, 2026, multiple state laws came into force simultaneously, with more on the way:

California enacted several laws, including the Generative AI Training Data Transparency Act (requiring developers of publicly available generative AI systems to publish information about their training data), the Companion Chatbots Act (mandating chatbot disclosures and safety protocols around harmful content, particularly for minors), and a Healthcare AI Act prohibiting AI from falsely claiming healthcare licenses when communicating with patients.

Texas implemented the Texas Responsible Artificial Intelligence Governance Act (TRAIGA), placing requirements on AI developers and deployers operating in the state.

New York passed the RAISE Act, requiring developers of large frontier AI models to publish safety protocols and report critical safety incidents.

Colorado’s AI Act is currently slated to come into force on June 30, 2026, requiring reasonable care to avoid algorithmic discrimination, risk management policies, impact assessments, and disclosure requirements.

In total, 38 states passed AI legislation in 2025, covering topics including AI in elections, deepfakes, healthcare AI disclosure, and algorithmic transparency.

The Trump administration’s December 2025 Executive Order threw a wrench into all of this by signaling federal intent to challenge state laws viewed as inconsistent with federal policy. It established an AI Litigation Task Force to identify and potentially challenge those laws. But the legal reality is that an executive order cannot directly preempt state law; only Congress can do that. So the state laws are technically in force, legally contested, and practically creating compliance complexity for companies operating nationally.

The Copyright Fight

One particularly heated battleground that the Framework touches on: AI training data and copyright.

The administration takes the position that “training AI models on copyrighted material does not violate copyright laws,” but it acknowledges that contrary arguments exist and supports letting the courts resolve the question. Multiple lawsuits from authors, publishers, news organizations, and musicians against AI companies are working their way through the courts. The Framework’s intellectual property section essentially signals: the executive branch is not going to resolve this for you. Watch the courts.

China: A System Built for Control

China’s approach to AI regulation is structurally different from both the EU’s and the U.S.’s, and understanding it is increasingly important for any company operating in or competing with Chinese markets.

China has three major AI regulations already in force: the Algorithm Recommendations Regulation (2022), the Deep Synthesis (Deepfake) Regulation (2022), and the Generative AI Services Regulation (2023). Together, these cover a wide range of AI applications.

The key features:

- AI content must comply with the law and with “core socialist values”

- AI-generated content must be labeled

- Providers must conduct security assessments before launching services with “public opinion attributes or social mobilization capabilities,” a category that broadly covers chatbots, virtual assistants, content recommendation systems, and social platforms

- Algorithms must be filed with the Cyberspace Administration of China

- Providers of generative AI services must obtain government approval before launching

China is not building a rights-based AI framework. It’s building a system where AI serves state interests and operates under state oversight. That’s a fundamentally different governance philosophy, and it directly shapes how Chinese AI companies operate globally and how foreign companies navigate the Chinese market.

China’s next five-year plan will be published in 2026 and is expected to contain significant signals about the future direction of Chinese AI investment, regulation, and international strategy.

What’s Actually New Right Now: The Latest Developments

Stanford’s AI Index: Technology Is Outrunning Governance

The 2026 AI Index from Stanford University, published in April 2026, contains a finding that cuts to the heart of the regulation debate: regulation is running behind the technology because we don’t really understand how it works.

The same report notes that U.S. state legislatures passed a record 150 AI-related bills in 2025. The EU AI Act’s first prohibitions are now in force. Japan, South Korea, and Italy all passed national AI laws last year. And yet the benchmarks designed to measure AI, the policies meant to govern it, and the institutions tasked with enforcement are all struggling to keep pace with a technology that continues to improve at a rate that genuinely surprises the researchers building it.

70+ Countries Now Have AI Policies

According to OECD AI Policy Observatory data, as of early 2026, over 70 countries or economies globally have issued at least one AI-related policy, strategy, or regulation.

Broadly, three camps have emerged: the EU-led “risk-based hard law” model, the U.S.-led “industry self-regulation supplemented by state laws” model, and the Japan/Singapore-led “soft governance with industry guidelines” model.

South Korea’s AI Basic Act, the first comprehensive AI law in the Asia-Pacific region, took effect in January 2026. The UK, despite being the world’s third-largest AI market, still has no AI-specific regulation; it’s taking a sector-by-sector approach through existing regulators.

The Bipartisan Child Safety Priority

One area where U.S. political agreement exists across party lines: protecting children from AI harms.

The White House Framework prioritizes child safety explicitly, calling for age verification requirements, parental control tools, protections against sexual exploitation, and safeguards against mental health and self-harm risks. Congress is actively pursuing the bipartisan GUARD Act and the Youth AI Privacy Act.

This isn’t just federal policy. California’s Companion Chatbots Act requires chatbot disclosures and self-harm safety protocols, with additional safeguards for minors. The EU AI Act includes child-specific protections, including a ban on AI systems that exploit age-related vulnerabilities. Multiple countries are moving on child AI safety simultaneously.

For any company building consumer-facing AI, especially products that young people could access, child safety compliance is now a global regulatory priority, not an optional feature.

The Compliance Reality for Companies

Let’s be concrete about what all of this means for businesses.

If you build or deploy AI, here’s where the compliance pressure is actually coming from right now:

EU high-risk systems (deadline: August 2, 2026):

If your AI touches hiring, credit, insurance, education, law enforcement, or critical infrastructure, and it operates in the EU or affects EU users, you are in scope. You need risk management documentation, conformity assessments, EU database registration, CE marking, and human oversight mechanisms. Non-compliance with the high-risk obligations risks fines of up to €15M or 3% of global annual turnover.

California (effective January 1, 2026):

Training data transparency if you build public-use generative AI. Healthcare disclosure rules if your AI communicates with patients. Chatbot disclosure and safety requirements if you build companion or consumer-facing AI. Consumer ADMT (automated decision-making technology) rights applying from 2027.

Colorado (effective June 30, 2026):

Algorithmic discrimination safeguards, risk management policies, impact assessments, and consumer notices if you develop or deploy AI used to make consequential decisions about consumers.

New York:

Safety protocol publication and incident reporting requirements under the RAISE Act.

Everywhere:

Child safety is now a universal compliance priority. Deepfake and AI content labeling requirements are proliferating. Security expectations around AI systems are increasing, with cyber insurance carriers now conditioning coverage on AI-specific security controls and red-teaming documentation.
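A jurisdiction-by-jurisdiction view of these deadlines can start as simple data. The Python sketch below is hypothetical: the `OBLIGATIONS` list and the `split_by_date` helper are illustrative names, the entries cover only the dated items in this section, and two of the dates could still move (Colorado may amend or delay its law, and the Digital Omnibus could push the EU deadline back).

```python
from datetime import date

# Hypothetical compliance map built only from the dates in this article.
# Two dates are subject to change: Colorado may amend or delay its law,
# and the EU "Digital Omnibus" could push the Annex III deadline back.
OBLIGATIONS = [
    ("California", "Training data transparency; chatbot and healthcare disclosures", date(2026, 1, 1)),
    ("Colorado", "Algorithmic discrimination safeguards; impact assessments", date(2026, 6, 30)),
    ("EU", "High-risk (Annex III) systems: risk management, conformity assessment, registration", date(2026, 8, 2)),
]


def split_by_date(markets, as_of):
    """Split obligations for the given markets into (in_force, upcoming) as of a date."""
    in_force, upcoming = [], []
    # Sort by effective date so both lists read chronologically.
    for jurisdiction, obligation, effective in sorted(OBLIGATIONS, key=lambda o: o[2]):
        if jurisdiction not in markets:
            continue  # Not a market this company operates in.
        bucket = in_force if effective <= as_of else upcoming
        bucket.append((jurisdiction, obligation, effective))
    return in_force, upcoming


if __name__ == "__main__":
    in_force, upcoming = split_by_date({"EU", "Colorado"}, as_of=date(2026, 7, 1))
    for jurisdiction, obligation, effective in in_force:
        print(f"IN FORCE  {jurisdiction}: {obligation} (since {effective})")
    for jurisdiction, obligation, effective in upcoming:
        print(f"UPCOMING  {jurisdiction}: {obligation} (from {effective})")
```

For a company selling into the EU and Colorado, a check on July 1, 2026 puts Colorado’s obligations in force and leaves the EU high-risk rules as upcoming. A real map would also record which of your systems fall into each category, not just where you sell.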

Five Things That Define the AI Regulation Debate in 2026

1. Innovation vs. protection, and nobody has solved the tension.

Every major regulatory framework is trying to prevent harm without killing the technology. The EU errs toward protection. The U.S. under Trump errs toward innovation. Neither has figured out how to fully do both simultaneously.

2. Federal preemption in the U.S. is a political fight, not a done deal.

The Trump administration wants it. Democrats are skeptical. Congress is slow. State laws keep coming. Companies can’t just wait for a federal resolution that may not arrive in time.

3. The copyright question will be decided by courts, not legislators.

Governments on both sides of the Atlantic are stepping back from directly resolving whether AI training on copyrighted material is lawful. That fight is playing out in courtrooms, and the outcomes will shape the AI industry’s legal foundation.

4. Child safety is the rare consensus issue.

Across virtually every jurisdiction, protecting minors from AI harms (exploitation, manipulation, self-harm, identity fraud) has achieved something close to bipartisan and cross-border agreement. If you build consumer AI, this is the compliance area to prioritize first.

5. Enforcement is coming even if legislation isn’t finished.

The EU is not waiting: fines can now be issued. The U.S. FTC and state attorneys general are active. Security regulators are asking harder questions. The era of “AI is still new, so enforcement is light” is ending.

What This Means for Different Audiences

If you’re building AI:

The regulatory environment is fragmenting. You cannot design one product for a global market and assume it’s compliant everywhere. You need a jurisdiction-by-jurisdiction compliance map, and you need it now, not when the enforcement notice arrives.

If you’re buying AI:

Your AI vendors’ compliance status is now your compliance risk. If you deploy a non-compliant high-risk AI system in the EU, you are a deployer under the AI Act, and deployer obligations apply to you. Start asking vendors for their compliance documentation.

If you’re investing:

AI governance failures are becoming material business risks, not just reputational ones. Companies that have invested in compliance infrastructure will have a competitive advantage in regulated markets. Companies that haven’t are carrying unpriced risk.

If you’re a consumer:

The laws being passed right now are, in many cases, specifically about you. Transparency rights, opt-out rights, rights to challenge automated decisions about your credit, job application, or insurance: these are real protections being built into law across multiple jurisdictions. They’re not perfect yet. But they’re arriving.

Key Facts at a Glance

| What | Detail |
|---|---|
| EU AI Act high-risk deadline | August 2, 2026 |
| EU max fines | Up to €35M or 7% of global revenue (prohibited practices) |
| U.S. state AI bills (2025) | 150+ passed (record high) |
| Countries with AI policies (2026) | 70+ globally (OECD data) |
| White House Framework released | March 20, 2026 |
| Colorado AI Act effective | June 30, 2026 |
| South Korea AI Basic Act effective | January 29, 2026 |
| Digital Omnibus delay possibility | Would push Annex III requirements to Dec 2027 |
| California laws effective | January 1, 2026 (multiple acts) |
| Stanford AI Index conclusion | Regulation is running behind the technology |

What to Watch for the Rest of 2026

The EU high-risk enforcement moment (August 2, 2026): This is the date the EU’s compliance requirements shift from preparation to enforcement for most enterprises. Watch for the first formal enforcement actions in the months that follow.

U.S. federal legislation attempts: Will Congress move on a federal AI bill? The TRUMP AMERICA AI Act is a 291-page draft that would codify Trump administration priorities and constrain state authority. Watch whether it gains bipartisan traction or stalls.

Colorado AI Act fate: The law is scheduled to take effect in June 2026 but was expected to be debated and possibly changed during this legislative session. Watch whether it takes effect as written, is amended, or is delayed again.

State vs. federal legal battles: The AI Litigation Task Force is designed to challenge state AI laws that conflict with federal priorities. The first actual legal challenges will define the boundaries of federal preemption and potentially reshape the U.S. regulatory landscape.

China’s five-year plan: Due in 2026, it will signal the direction of Chinese AI investment, domestic regulation, and international strategy for the rest of the decade.

Copyright cases: Multiple major lawsuits accusing AI companies of copyright infringement through their training data are progressing through the courts. A significant ruling, in either direction, will reshape the legal foundation of the AI industry.

The Bottom Line

Here’s what the AI regulation story of 2026 really is:

It’s not a story about governments killing innovation.

It’s not a story about tech companies running wild without rules.

It’s a story about the world’s most powerful technology arriving faster than human institutions know how to manage it, and everyone trying to figure out the right answer at the same time, with incomplete information, in real time.

The EU has chosen: better to act now, even imperfectly, than to wait.

The U.S. has chosen: better to innovate first and regulate specifically than to impose broad requirements on a technology still being defined.

China has chosen: AI in service of the state, with state oversight embedded from the start.

None of these approaches is entirely right. None is entirely wrong. But all three are shaping the AI products and services you’ll use in the years ahead, and the rules your company has to follow to reach global markets.

The window to get ahead of this is narrowing.